I think that [Judea’s work] is going to change the world. – Stuart Russell, Judea Pearl Symposium, 2010

In these lab exercises, you work with Bayesian networks.

Bayes Networks Review

This exercise reviews the burglary example from the text (Figure 14.2).

Exercise 6.1

Do the following exercises based on AIMA’s burglary example given in Figure 14.2.

Download the following sample code: lab1.py.

Verify that the code produces the correct answers for the following examples from the text and lecture:

- P(Alarm | burglary ∧ ¬earthquake)

- P(John | burglary ∧ ¬earthquake)

- P(Burglary | alarm)

- P(Burglary | john ∧ mary)

Be sure that you can explain these answers (i.e., by reviewing your class notes or the text, Section 14.4.1).
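One way to check the answers independently of lab1.py is to enumerate the full joint distribution directly. The sketch below is not the lab's sample code: the names `bern`, `joint`, and `query` are hypothetical, and only the CPT values are taken from AIMA Figure 14.2.

```python
from itertools import product

# CPTs from AIMA Figure 14.2 (burglary network).
P_B = 0.001                                   # P(burglary)
P_E = 0.002                                   # P(earthquake)
P_A = {(True, True): 0.95, (True, False): 0.94,
       (False, True): 0.29, (False, False): 0.001}  # P(alarm | B, E)
P_J = {True: 0.90, False: 0.05}               # P(john | A)
P_M = {True: 0.70, False: 0.01}               # P(mary | A)

VARS = ("B", "E", "A", "J", "M")


def bern(p, value):
    """Probability that a Boolean variable with P(true) = p takes `value`."""
    return p if value else 1.0 - p


def joint(w):
    """Joint probability of one complete assignment w (dict of var -> bool)."""
    return (bern(P_B, w["B"]) * bern(P_E, w["E"])
            * bern(P_A[(w["B"], w["E"])], w["A"])
            * bern(P_J[w["A"]], w["J"])
            * bern(P_M[w["A"]], w["M"]))


def query(var, evidence):
    """P(var = true | evidence), summing the joint over consistent worlds."""
    totals = {True: 0.0, False: 0.0}
    for values in product([True, False], repeat=len(VARS)):
        w = dict(zip(VARS, values))
        if all(w[k] == v for k, v in evidence.items()):
            totals[w[var]] += joint(w)
    return totals[True] / (totals[True] + totals[False])


print(round(query("A", {"B": True, "E": False}), 3))  # P(Alarm | burglary, not earthquake)
print(round(query("J", {"B": True, "E": False}), 3))  # P(John | burglary, not earthquake)
print(round(query("B", {"A": True}), 3))              # P(Burglary | alarm)
print(round(query("B", {"J": True, "M": True}), 3))   # P(Burglary | john, mary)
```

With the Figure 14.2 values, the four queries should come out at roughly 0.94, 0.849, 0.374, and 0.284. Full-joint enumeration is exponential in the number of variables, so it is only practical for checking small networks like this one.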

Conditional Independence

The following exercises demonstrate conditional independence using Bayesian networks that have varied topologies. See Section 13.5.2 for a discussion of conditional independence. The first exercise concerns a two-test cancer example (see note 1).

Exercise 6.2

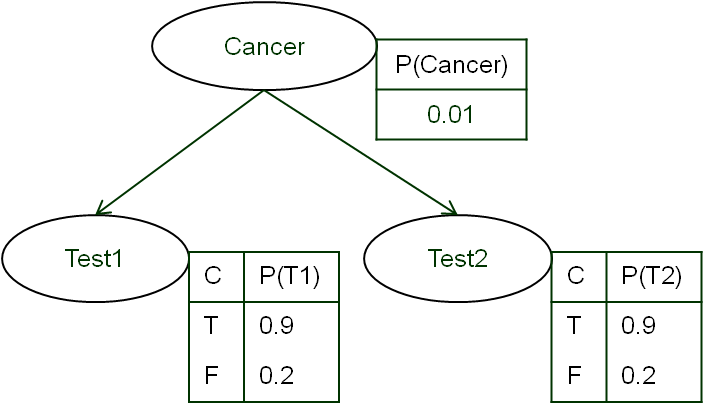

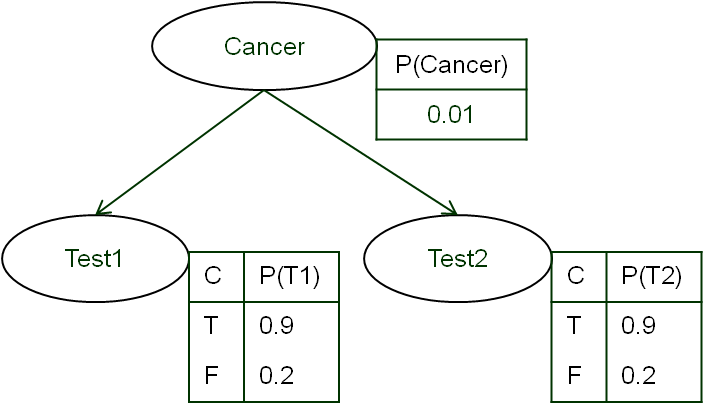

The Bayesian network shown on the right represents a cancer domain in which two different cancer tests can be run and in which the tests are considered to be conditionally independent of one another given the patient's cancer status.

Implement this network and use it to compute the following probabilities:

- P(Cancer | positive results on both tests)

- P(Cancer | a positive result on test 1, but a negative result on test 2)

Do the results make sense? How much effect does one negative test result have on the probability of having cancer?

Be sure that you can explain your answers (i.e., by working them out by hand).
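The hand calculation for this kind of network follows directly from conditional independence: each test result contributes one likelihood factor, and the product is normalized at the end. The sketch below illustrates that pattern. The function name `posterior` and the three probabilities are placeholders chosen for illustration, not values read from the figure, so substitute the figure's CPT entries before comparing against your own answers.

```python
def posterior(prior, p_pos_given_c, p_pos_given_not_c, results):
    """P(cancer | results) for tests that are conditionally independent given cancer.

    `results` is a sequence of booleans: True for a positive test, False for negative.
    """
    weight_c = prior            # running product for the "cancer" hypothesis
    weight_not_c = 1.0 - prior  # running product for the "no cancer" hypothesis
    for positive in results:
        weight_c *= p_pos_given_c if positive else 1.0 - p_pos_given_c
        weight_not_c *= p_pos_given_not_c if positive else 1.0 - p_pos_given_not_c
    return weight_c / (weight_c + weight_not_c)  # normalize


# Placeholder numbers (assumptions, not taken from the figure).
PRIOR = 0.01              # P(cancer)
P_POS_GIVEN_C = 0.9       # P(test positive | cancer)
P_POS_GIVEN_NOT_C = 0.2   # P(test positive | no cancer)

print(posterior(PRIOR, P_POS_GIVEN_C, P_POS_GIVEN_NOT_C, [True, True]))
print(posterior(PRIOR, P_POS_GIVEN_C, P_POS_GIVEN_NOT_C, [True, False]))
```

Comparing the two printed values shows how strongly a single negative result pulls the posterior back down.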

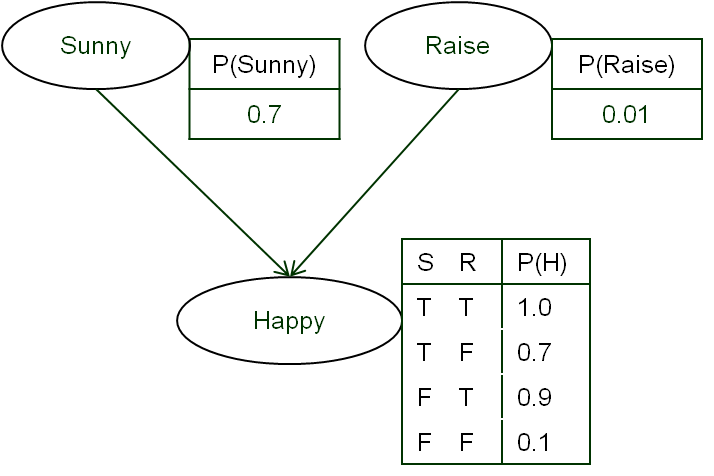

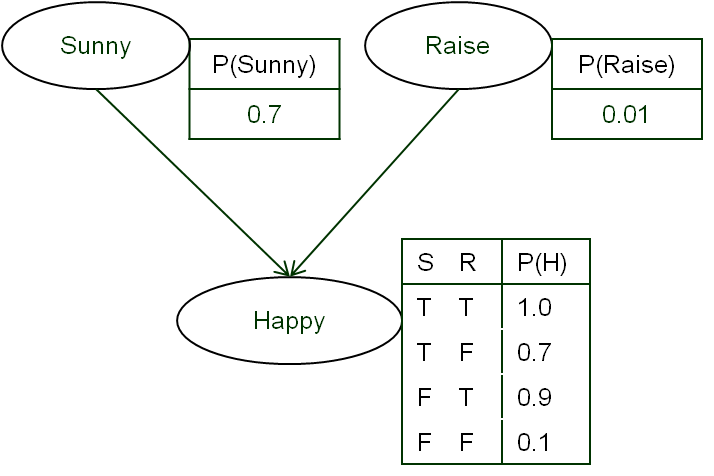

The second example concerns a two-cause happiness example (see note 2). Here, the two causes are independent of one another a priori, but once their common effect is observed, evidence about one cause changes the probability of the other during inference.

Exercise 6.3

The Bayesian network shown on the right represents a happiness domain in which either the sun or a raise in pay can increase the happiness of an agent.

Implement this network and use it to compute the following probabilities:

- P(Raise | sunny)

- P(Raise | happy ∧ sunny)

Be sure that you can explain these answers by hand.

Use your implementation to compute the following probabilities:

- P(Raise | happy)

- P(Raise | happy ∧ ¬sunny)

Do these results make sense to you? Why or why not? We leave working these problems out by hand as an exercise for later.
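The four queries above can be explored with a small enumeration over the three variables. The sketch below is one possible way to do so; the function name `p_raise` is hypothetical, and the CPT numbers are placeholders assumed for illustration, not values read from the figure, so replace them with the figure's entries.

```python
# Placeholder CPTs (assumptions for illustration; use the figure's values).
P_SUNNY = 0.7
P_RAISE = 0.01
P_HAPPY = {(True, True): 1.0, (True, False): 0.7,
           (False, True): 0.9, (False, False): 0.1}  # keyed by (sunny, raise)


def p_raise(happy=None, sunny=None):
    """P(raise | evidence); an argument left as None is unobserved."""
    totals = {True: 0.0, False: 0.0}
    for s in (True, False):
        for r in (True, False):
            for h in (True, False):
                # Skip worlds that contradict the evidence.
                if sunny is not None and s != sunny:
                    continue
                if happy is not None and h != happy:
                    continue
                p = P_SUNNY if s else 1.0 - P_SUNNY
                p *= P_RAISE if r else 1.0 - P_RAISE
                p *= P_HAPPY[(s, r)] if h else 1.0 - P_HAPPY[(s, r)]
                totals[r] += p
    return totals[True] / (totals[True] + totals[False])


print(p_raise(sunny=True))               # P(Raise | sunny)
print(p_raise(happy=True, sunny=True))   # P(Raise | happy, sunny)
print(p_raise(happy=True))               # P(Raise | happy)
print(p_raise(happy=True, sunny=False))  # P(Raise | happy, not sunny)
```

The first query should return the prior on Raise unchanged, since Sunny alone carries no information about Raise. The remaining three differ from one another because observing Happy couples the two causes.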

Approximate Inference Algorithms for Bayesian Networks

The exact inference algorithms used above tend to be intractable on realistic problems with large, densely connected networks, so approximate algorithms are often necessary.

Exercise 6.4

Rerun the inferences specified in the previous exercises using rejection sampling (see Figure 14.14), likelihood weighting (see Figure 14.15), and Gibbs sampling (see Figure 14.16). Do the results match those of the exact inference algorithms? Why or why not?
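To make the comparison concrete, the sketch below estimates P(Burglary | john ∧ mary) on the burglary network with two of the three methods, rejection sampling and likelihood weighting. It is a minimal standalone illustration, not the textbook's pseudocode or the lab's sample code: the function names, the sample count, and the seed are all assumptions, and Gibbs sampling is left out. Only the CPT values come from AIMA Figure 14.2.

```python
import random

# CPTs from AIMA Figure 14.2 (burglary network).
P_B, P_E = 0.001, 0.002
P_A = {(True, True): 0.95, (True, False): 0.94,
       (False, True): 0.29, (False, False): 0.001}  # P(alarm | B, E)
P_J = {True: 0.90, False: 0.05}                     # P(john | A)
P_M = {True: 0.70, False: 0.01}                     # P(mary | A)


def prior_sample(rng):
    """Sample every variable in topological order from the network's prior."""
    b = rng.random() < P_B
    e = rng.random() < P_E
    a = rng.random() < P_A[(b, e)]
    j = rng.random() < P_J[a]
    m = rng.random() < P_M[a]
    return b, e, a, j, m


def rejection_sampling(n, rng):
    """Estimate P(Burglary | john, mary), discarding samples that contradict the evidence."""
    kept = hits = 0
    for _ in range(n):
        b, _e, _a, j, m = prior_sample(rng)
        if j and m:
            kept += 1
            hits += b
    return hits / kept if kept else float("nan")


def likelihood_weighting(n, rng):
    """Estimate the same query with the evidence fixed and each sample weighted."""
    weighted = {True: 0.0, False: 0.0}
    for _ in range(n):
        # Sample only the non-evidence variables.
        b = rng.random() < P_B
        e = rng.random() < P_E
        a = rng.random() < P_A[(b, e)]
        # Weight = likelihood of the evidence given its parents.
        weighted[b] += P_J[a] * P_M[a]
    return weighted[True] / (weighted[True] + weighted[False])


rng = random.Random(0)  # fixed seed so that repeated runs are comparable
print(rejection_sampling(200_000, rng))
print(likelihood_weighting(200_000, rng))
```

Because P(john ∧ mary) is only about 0.002, rejection sampling keeps just a few hundred of the 200,000 samples, so both estimates fluctuate around the exact answer (about 0.284) rather than matching it. Rerunning with different seeds or sample counts shows how the spread changes.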

Checking in

Submit your source code as specified above in Moodle under lab 6.

1 This example is taken from Thrun’s two-test cancer example.

2 This example is taken from Thrun’s confounding cause example.
